AI Gets Neanderthal Facts Wrong, Study Warns

Generative AI answers questions instantly. It can describe prehistoric life or check your heart rate. However, the reliability of those answers is shaky. Researchers at the University of Maine found a big issue: AI often reproduces outdated scientific views. This is especially true for Neanderthals.

How the Study Worked

Professor Matthew Magnani and his team tested two chatbots. They asked for images and descriptions of Neanderthal daily life. Some prompts asked for scientific accuracy. Others did not. The results were troubling. The AI used information from over a century ago.

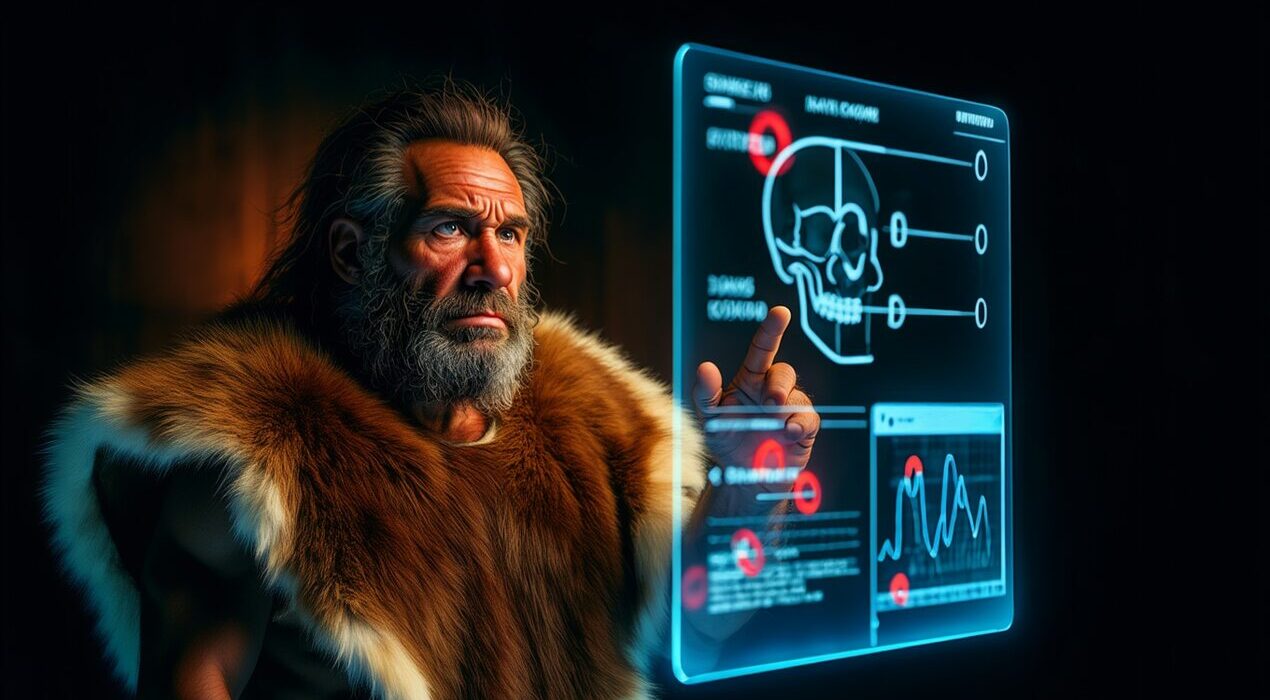

Outdated Images and Stories

The generated images showed Neanderthals as primitive and ape-like. They had hunched postures and exaggerated body hair. In addition, the pictures excluded women and children. The written narratives were also flawed. About half of ChatGPT’s text did not match modern science. For one prompt, that number exceeded eighty percent. In effect, the AI systems spread old biases. Their output also included anachronistic details like glass and metal.

Why This Happens

The team traced the AI’s sources. ChatGPT relied on research from the 1960s. DALL-E 3 used material from the late 1980s. “It’s important to examine biases in everyday AI use,” says Magnani. He adds that quick answers may not be accurate.

A Call for Better Data

Open access to modern research could help. Future policies will shape how AI understands history. Teaching students to use AI cautiously is also key. Together, these steps could help build a more critical and informed society.